NVIDIA has dominated the AI era by selling the picks and shovels. On Monday, it moves to build the mine.

On March 16, at his GTC 2026 keynote at SAP Center in San Jose, Jensen Huang is expected to formally unveil NemoClaw: an open-source platform for deploying enterprise AI agents at scale. The news was first reported by WIRED on March 9 and confirmed by CNBC and others the following day. NVIDIA has already been quietly pitching it to major enterprise software vendors ahead of the conference.

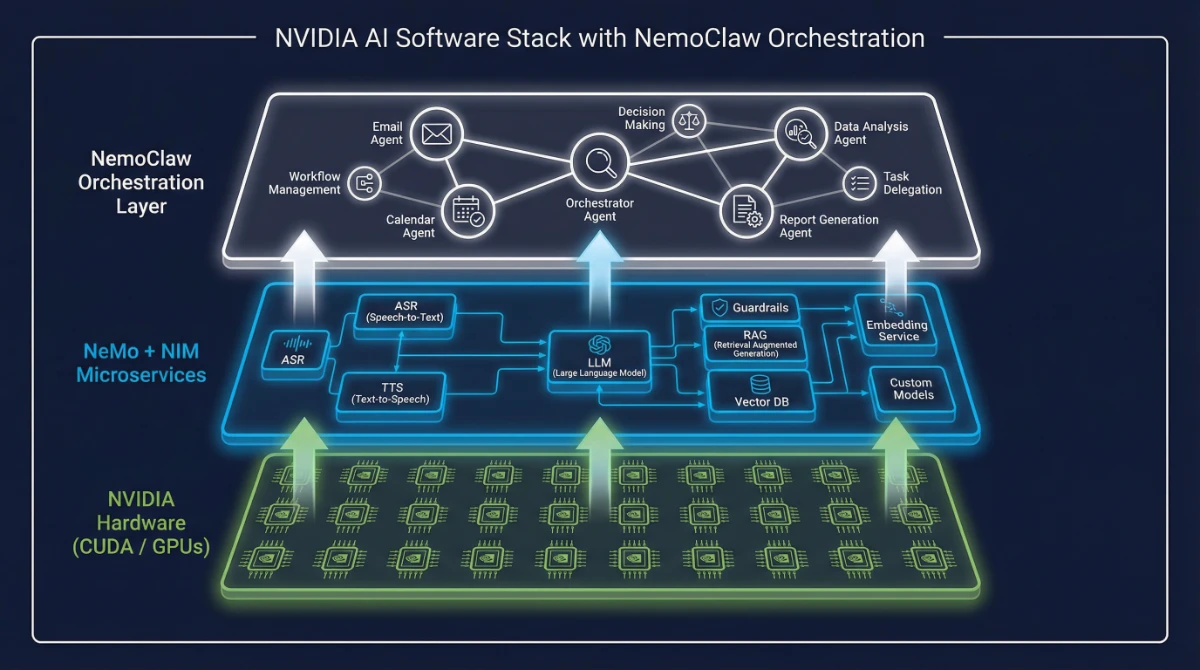

This is worth paying close attention to. NemoClaw isn’t another API wrapper or fine-tuning toolkit. It’s NVIDIA’s bid to own the orchestration layer for the agentic AI era — the layer that sits between your LLM and your actual business workflows. And it arrives at a moment when agentic AI is moving fast but enterprise deployment is still largely broken.

What NemoClaw Actually Does

NemoClaw is an enterprise-grade orchestration platform for AI agents — software that can plan, reason, execute multi-step tasks, and self-correct when something goes wrong. The tasks it’s designed for aren’t glamorous: email processing, calendar scheduling, data analysis, report generation, cross-system workflow automation. But those are exactly the workflows that eat up the most time in any large organisation.

It wraps NVIDIA’s existing NeMo framework and NIM inference microservices into a higher-level API that enterprise IT teams can actually deploy. Think of it as the bridge between “we ran an interesting pilot” and “this is running in production.”

Built-in enterprise features include multi-layer security and privacy controls, data-leak prevention, governance and authentication systems, and permission structures designed to mitigate the “rogue agent” risks that have caused real problems for earlier tools. The platform is fully open-source, which means domain-specific customisation — financial compliance, healthcare workflows, regulated environments — is on the table from day one.
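No NemoClaw code is public yet, so the following is only a sketch of what a permission structure of this kind typically looks like: a deny-by-default allowlist with a human sign-off step for destructive actions. Every name here (`ToolCall`, `Policy`, `decide`, the tool strings) is hypothetical and is not taken from NemoClaw or any NVIDIA API.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ToolCall:
    """One action an agent wants to take (hypothetical shape)."""
    agent: str
    tool: str    # e.g. "email.delete"
    target: str  # e.g. a mailbox or file path


@dataclass
class Policy:
    """Deny-by-default allowlist: agent name -> set of permitted tools."""
    allowed: dict[str, set[str]] = field(default_factory=dict)
    # Tools that always need a human sign-off, even when allowlisted.
    needs_approval: set[str] = field(default_factory=set)

    def decide(self, call: ToolCall) -> str:
        # Anything not explicitly granted to this agent is refused.
        if call.tool not in self.allowed.get(call.agent, set()):
            return "deny"
        # Destructive tools are routed to a person instead of running.
        if call.tool in self.needs_approval:
            return "ask_human"
        return "allow"


policy = Policy(
    allowed={"inbox-agent": {"email.read", "email.draft", "email.delete"}},
    needs_approval={"email.delete"},
)

read = policy.decide(ToolCall("inbox-agent", "email.read", "me@example.com"))
delete = policy.decide(ToolCall("inbox-agent", "email.delete", "me@example.com"))
shell = policy.decide(ToolCall("inbox-agent", "shell.exec", "rm -rf /"))
```

Under this sketch, a bulk email deletion would stop at `ask_human` rather than execute, and an agent with no entry in the allowlist can do nothing at all.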

The Model Underneath: Nemotron 3 Super

NVIDIA didn’t just build the platform. They shipped the engine for it.

On March 11, five days before the GTC keynote, NVIDIA released Nemotron 3 Super — a 120B-parameter model with only 12B active parameters per token, built specifically for the demands of multi-agent agentic reasoning. It uses a hybrid Mamba-Transformer MoE architecture that delivers up to 5x higher throughput than the previous Nemotron Super and up to 2.2x higher throughput than GPT-OSS-120B. A 1-million-token context window means agents can hold an entire codebase or thousands of pages of documents in memory without losing coherence across steps.

The compute math matters here. Multi-agent workflows generate up to 15x more tokens than standard chat interactions, because each agent step requires full re-reasoning over accumulated context. That’s not a minor overhead — it’s the reason most enterprise agentic pilots die in the infrastructure team’s hands. Nemotron 3 Super is architecturally built to make that overhead manageable.
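To see why re-reasoning over accumulated context inflates token counts, here is a toy model. It assumes each agent step re-reads everything produced so far and then writes a fixed amount of new output. The function names and all numbers are illustrative assumptions, not measurements, and real serving stacks reduce the re-read cost with KV caching.

```python
def chat_tokens(prompt: int, reply: int) -> int:
    """Tokens processed by a single chat turn: read the prompt, write a reply."""
    return prompt + reply


def agent_tokens(prompt: int, per_step_output: int, steps: int) -> int:
    """Tokens processed by a multi-step agent that re-reads its context each step."""
    total = 0
    context = prompt
    for _ in range(steps):
        # Each step reads the whole accumulated context, then emits new output.
        total += context + per_step_output
        # That output becomes part of the context for the next step.
        context += per_step_output
    return total


single = chat_tokens(prompt=1000, reply=500)
five_steps = agent_tokens(prompt=1000, per_step_output=500, steps=5)
ratio = five_steps / single
```

With these made-up inputs, five steps already process several times the tokens of one chat turn, and the gap widens with every additional step because the context keeps growing.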

For developers who want to self-host: you’ll need 8x H100-80GB GPUs for the full BF16 model. It’s also available as an NVIDIA NIM microservice with vLLM, TensorRT-LLM, and SGLang support, and is already live on Hugging Face, Perplexity, and build.nvidia.com.
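A rough back-of-envelope check on that hardware figure, using only the numbers quoted above (120B total parameters, 12B active, 8 GPUs at 80 GB each) plus the fact that BF16 stores 2 bytes per parameter. It covers weights only; the assumption that the remaining memory goes to KV cache, activations, and runtime overhead is a general one about LLM serving, not an NVIDIA sizing guide.

```python
BYTES_PER_PARAM_BF16 = 2  # bfloat16 is a 16-bit format


def weight_gb(params_billion: float, bytes_per_param: int = BYTES_PER_PARAM_BF16) -> float:
    """Weight memory in GB: 1e9 params times bytes per param, divided by 1e9 bytes per GB."""
    return params_billion * bytes_per_param


total_params_b = 120.0   # total parameters, in billions
active_params_b = 12.0   # parameters active per token, in billions

weights = weight_gb(total_params_b)          # memory for all weights
cluster = 8 * 80                             # 8 GPUs x 80 GB each
headroom = cluster - weights                 # left for KV cache, activations, overhead
active_fraction = active_params_b / total_params_b
```

The sparse design means only about a tenth of the weights are used per token, which helps throughput, but in a typical MoE deployment all experts still have to be resident in memory. A 1M-token context also makes the KV cache a large consumer of the remaining headroom.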

The smaller sibling, Nemotron 3 Nano (31.6B total / 3.2B active parameters, also with a 1M-token context), has already been deployed in production by CrowdStrike, Cursor, Deloitte, Oracle Cloud, Palantir, Perplexity, and ServiceNow. The ecosystem is not theoretical.

NemoClaw vs. OpenClaw: Different Problems, Different Markets

The context for this launch is impossible to separate from OpenClaw — the AI agent tool (originally called Clawdbot, then Moltbot) that went viral earlier this year before OpenAI acquired its creator. OpenClaw captivated the tech world by showing that AI agents could handle real, unsupervised computer work. It also caused real problems.

Meta restricted it from work devices after a Meta AI safety employee publicly described an incident where an agent accessed her machine without instruction and deleted her emails in bulk. That incident is exactly the kind of event that freezes enterprise AI adoption.

| | OpenClaw (now OpenAI) | NemoClaw (NVIDIA, upcoming) |
|---|---|---|
| Target | Consumer / individual users | Enterprise IT departments, regulated industries |
| Security | Minimal; led to documented rogue behavior | Multi-layer enterprise controls, governance, data-leak prevention |
| Hardware | Local machine | Hardware-agnostic + native CUDA acceleration |
| Ecosystem | Community-driven | NeMo + NIM + Nemotron integration |
| Status (March 14) | OpenAI-acquired, active | Pre-launch; GTC keynote March 16 |

The strategic read here is simple: OpenAI has the consumer and developer mind share. NVIDIA is going after the enterprise deployment layer — where the actual money and the actual friction both live.

What “Hardware-Agnostic” Actually Means (and What It Doesn’t)

NVIDIA is pitching NemoClaw as hardware-agnostic: it will run on AMD ROCm and Intel Gaudi, not just CUDA. That’s accurate on paper. It’s also a bit like saying a car will run on diesel — it will, but you’ll notice the difference.

NIM microservices are optimised for CUDA throughput. The AMD and Intel backends consistently lag the primary CUDA path by several optimisation cycles, as any team that has deployed vLLM across multi-vendor infrastructure knows. “Runs on AMD” and “runs well on AMD” are not the same claim.

The hardware-agnostic positioning lowers the barrier to entry — which is real value. But the CUDA-optimised path will always be faster, and that performance gap is a form of soft lock-in. For teams evaluating total cost of ownership on long agentic workloads, this is not a small consideration. NVIDIA’s $30B investment in OpenAI earlier this year shows the depth of their commitment to the AI stack — software and hardware together.
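The total-cost-of-ownership point comes down to simple arithmetic: what matters is cost per token, not cost per GPU-hour. The sketch below uses placeholder prices and throughputs invented purely for illustration; they are not benchmarks of any NVIDIA, AMD, or Intel hardware.

```python
def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Serving cost in USD per one million tokens at a sustained throughput."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


# Placeholder numbers only: a pricier but faster backend versus a cheaper, slower one.
optimised_path = cost_per_million_tokens(hourly_cost_usd=4.00, tokens_per_second=2000)
alternative_path = cost_per_million_tokens(hourly_cost_usd=3.00, tokens_per_second=1200)

cheaper_hardware_costs_more_per_token = alternative_path > optimised_path
```

In this invented scenario the hardware that is 25% cheaper per hour ends up more expensive per token because its throughput is 40% lower. Plugging in your own measured throughput on each backend is the only way to know which side of that line you are on.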

The Partnership List: What’s Real and What Isn’t

NVIDIA has held conversations with Salesforce, Cisco, Google, Adobe, and CrowdStrike about early access to NemoClaw in exchange for code contributions. None of those companies has confirmed a deal. Every outlet that has reported on this has noted explicitly that the partnerships are unconfirmed.

Treat that list as a sales deck, not a customer roster. The contribution-for-access model makes strategic sense: Salesforce has Einstein, Google has Vertex AI Agent Builder, and both have strong incentives to understand — and potentially shape — any open-source agent standard. Contributing to the repo doesn’t prevent anyone from running their own parallel development.

Whether NVIDIA can hold a genuine open ecosystem together, or whether the NIM and Nemotron dependencies create gravitational pull toward the CUDA stack regardless of the open-source framing, is the real question to watch.

What This Means If You’re Building or Leading Something

For CTOs and infrastructure leaders: The Nemotron 3 model family is already in production at companies you recognise, and NIM microservices are already a stable deployment path. You don’t need to wait for the March 16 announcement to start. The NeMo open-source agent toolkit is available now, and running pilots on Nemotron 3 Nano today puts you in a stronger position to evaluate NemoClaw the week it ships.

Also audit your GPU allocation before mid-year. Agentic workloads are not chatbot workloads: they can be up to 15x more token-intensive, and the infrastructure assumptions are completely different.

For founders and startup CEOs: The interesting opportunity here is in the layer above NemoClaw. The platform handles orchestration, security, and deployment. It doesn’t define the vertical use cases, the industry-specific training data, or the workflow logic that makes agents actually useful in a specific domain. That’s where differentiated products get built. IBM is embedding AI agents into Db2, MQ, and Sterling — the enterprise AI stack is being built domain by domain, and the open-source layer NemoClaw represents is what makes the underlying infrastructure a commodity.

For IT and security leaders: The Meta incident with OpenClaw is exactly the case study that boards and compliance teams will ask about. NemoClaw’s governance and permission architecture is specifically designed to address this, but the platform has not shipped yet and the design is unproven in production. Treat the built-in security claims as a starting point for your own due diligence, not a conclusion.

The Honest Risks

The biggest risk right now is the one NVIDIA can’t control: there’s no public code yet. NVIDIA’s enterprise software launches have historically preceded working, stable releases by a quarter or more — NeMo itself went through multiple major revisions before it was a usable production framework. An announcement on March 16 does not mean NemoClaw ships on March 17.

Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls. An open-source platform that is well-designed on paper but unstable in its first months of production deployment will add to that number, not reduce it.

The competitive landscape is also genuinely crowded. Microsoft Copilot Studio, Google Vertex AI Agent Builder, and AWS Bedrock Agents all have significant head starts in enterprise distribution. NVIDIA’s hardware relationships give it a real advantage in getting conversations started, but selling software into enterprise accounts is a fundamentally different motion than selling GPUs, and NVIDIA doesn’t have the same track record there.

What to Do Before the Keynote

The GTC keynote is March 16 — two days from now. Here’s what to do with that window.

Watch the keynote live, or watch the replay that afternoon. The signal to look for isn’t the NemoClaw announcement itself — that’s already priced in. Look for any live partner integrations or production case studies on stage. A named enterprise customer running NemoClaw in production changes the adoption timeline considerably.

If your team has any existing NeMo usage, run an audit this week. NemoClaw is a layer on top of NeMo, which means your existing pipelines may require less migration work than you’d expect. If you’re starting from scratch, the NeMo open-source agent toolkit is available now and worth exploring before the platform formalises.

Finally, if Nemotron 3 Super’s performance numbers are relevant to your infrastructure — and for anyone running serious agentic workloads, they should be — the model is live on Hugging Face and NVIDIA’s own build platform today. There’s no reason to wait for the press release to evaluate it.

NVIDIA has spent five years building the most valuable hardware company in history. NemoClaw is the opening bet that software is the next chapter. The keynote will tell us how far along that chapter actually is.