There’s no clean playbook for financing compute at the scale that 2026 requires. So Mistral borrowed $830 million from seven banks. Meta sextupled a Texas data center budget in five months. Oracle began layoffs that could reach 30,000 people to pay for GPU racks.

Three companies. Three different improvised financing models. All of them responding to the same constraint: the infrastructure agentic AI requires costs more than anyone planned, and there isn’t enough time to wait for the balance sheet to catch up.

What makes the final week of March 2026 worth examining isn’t each move in isolation. It’s what they reveal when read together, as a single story that most outlets covered as three separate items.

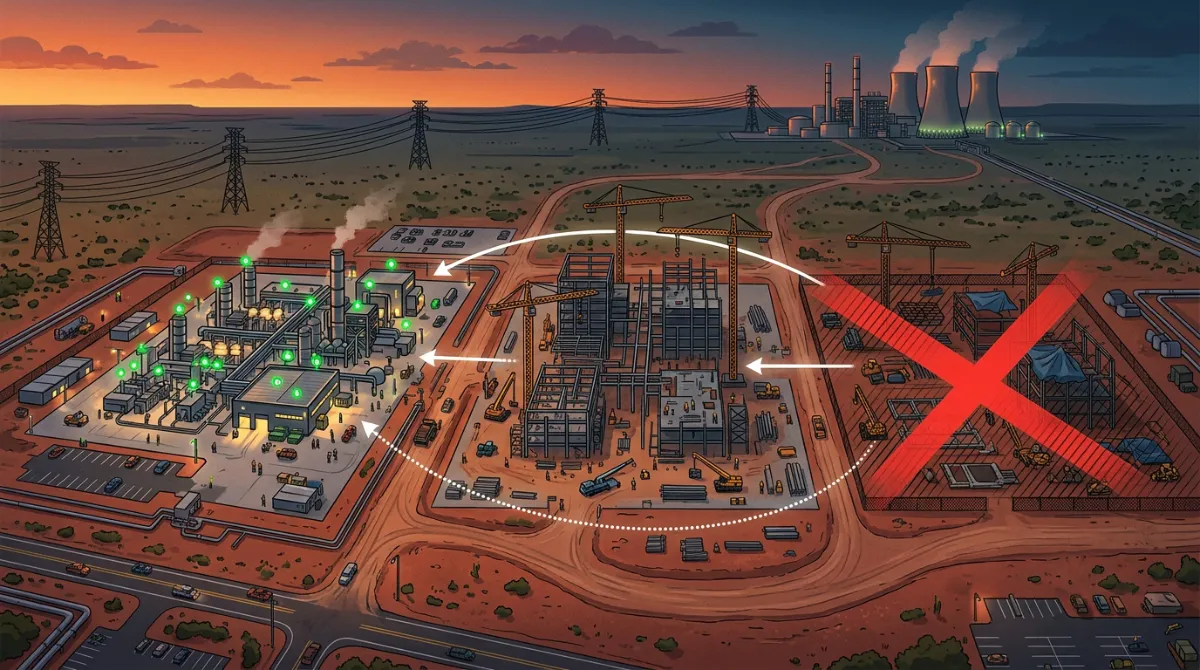

The Abilene Collapse Nobody Connected to the Other Two Stories

On March 6, Bloomberg reported that Oracle and OpenAI scrapped plans to expand the Abilene, Texas Stargate data center after negotiations broke down over financing and OpenAI’s changing compute needs. The collapsed talks created an opening for Meta to step in and consider leasing the planned expansion site from developer Crusoe — with Nvidia facilitating Meta’s discussions.

The existing 1,000-acre Abilene campus remains unaffected. Two buildings are already running training and inference workloads on Nvidia GB200 Blackwell racks, with six more scheduled for completion by mid-2026, bringing total capacity to roughly 1.2 gigawatts. What collapsed was the separate, never-finalized 600MW expansion lease.

The reason for the collapse matters. Building 600 megawatts of Blackwell capacity at a site where the power grid won’t be ready until after Nvidia’s next-generation Rubin architecture ships means paying for hardware that is a generation behind before the buildings go live. Rubin is set to be superseded by an Ultra variant in 2027, with the Feynman generation coming in 2028. OpenAI chose to redirect that capacity elsewhere.

This is the hidden connective tissue in the March 26–31 announcements. Three weeks after Oracle and OpenAI walked away from the Abilene expansion, Meta announced its $10 billion El Paso commitment, with Nvidia reportedly brokering Meta’s access to the Crusoe expansion site Oracle vacated. Oracle, meanwhile, is cutting thousands of employees to finance the Stargate commitments it still has. And Mistral is building in France specifically because neither of them is building there.

Nvidia is no longer just a chip vendor. It is an infrastructure broker, actively reshaping who gets capacity and where.

Mistral’s $830M: The Debt Model for AI Sovereignty

Mistral has raised €2.8 billion in equity since its 2023 founding. For its data center, it went to the debt markets — closing an $830 million facility from a consortium including BNP Paribas, Crédit Agricole CIB, HSBC, and MUFG to build a data center in Bruyères-le-Châtel, outside Paris, targeting operational status by end of Q2 2026. The company aims to reach 200 megawatts of compute capacity across Europe by 2027.

CEO Arthur Mensch framed it as a sovereignty play: governments, enterprises, and research institutions want to build AI environments without depending on third-party cloud providers. That’s the pitch to the banks, and clearly it worked.

This is a meaningful template. Debt financing makes sense for infrastructure with long-term contracted revenue — the same logic that backs utilities, toll roads, and fiber networks. For a four-year-old AI startup to access institutional debt at this scale, European banks have had to conclude that sovereign AI infrastructure is creditworthy. The political context — the EU AI Act, procurement preferences for European cloud, growing sensitivity about data routing to U.S. jurisdictions — is doing real work in that credit decision.

If you’re building AI products in regulated European markets, Mistral’s data center matters to your vendor roadmap. It’s not charity compute. It’s an infrastructure bet with a specific customer thesis: enterprises and governments who cannot or will not use AWS or Azure for their AI workloads.

Meta’s $10 Billion Texas Pivot

Meta announced on March 26 that it was increasing its El Paso, Texas data center investment to $10 billion — more than sixfold from the $1.5 billion committed when it broke ground in October 2025. The facility targets 1 gigawatt of capacity ahead of its 2028 opening.

The company confirmed over 300 operational jobs at completion and more than 4,000 construction workers at peak. The facility uses a closed-loop, liquid-cooled system that recirculates water and will consume zero water for most of the year.

The sixfold cost increase in five months is not a budget overrun. Meta’s 2026 capital expenditure guidance was already up to $135 billion. In February it signed major chip deals with Nvidia and AMD, and committed to becoming the first customer for Arm’s new data center processor. The hardware pipeline was already locked in. The El Paso expansion is the site catching up to what the chip commitments already implied.

And given the Abilene timeline, Meta now has reason to build fast. GPU generations are turning over faster than power infrastructure can follow. A site that doesn’t reach 1 GW by 2028 risks opening with chips that are already a generation old. That’s why Meta over-commits early and at scale. Better to spend $10 billion on a site that’s ready than $3 billion on one that opens too late.

Oracle: The Austerity Model

The Oracle situation is the most revealing of the three, because it shows what the infrastructure race looks like when the balance sheet can’t keep up.

Oracle began cutting employees on March 31. Workers in the United States, India, Canada, Mexico, and other countries received termination emails from “Oracle Leadership” at approximately 6 a.m., with no prior warning from HR or direct managers.

TD Cowen estimates the cuts could affect up to 18% of Oracle’s 162,000-person workforce, roughly 29,000 people, and free up $8–10 billion in cash flow. Oracle disclosed a $2.1 billion restructuring plan in its March 2026 SEC filing.

Oracle has taken on $58 billion in new debt over the past two months, funding data centers in Texas, Wisconsin, and New Mexico, with total debt exceeding $100 billion. The company’s contracted future revenue tells a different story: remaining performance obligations stood at over $520 billion, up more than 400% year over year, driven by an agreement with OpenAI. This is not a company in revenue distress. It is a company that has pre-sold more cloud capacity than it can currently afford to build, and is cutting its human workforce to close the gap.
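To make the scale of that gap concrete, here is a back-of-the-envelope sketch in Python using only the figures quoted above. The function name and the derived ratios are illustrative, not anything Oracle or TD Cowen published, and "up more than 400%" is treated as exactly +400%, so the prior-year figure is an upper bound.

```python
# Figures quoted in the article (USD billions unless noted).
WORKFORCE = 162_000                       # Oracle headcount
CUT_SHARE_MAX = 0.18                      # TD Cowen upper estimate
FREED_CASH_LOW, FREED_CASH_HIGH = 8.0, 10.0
NEW_DEBT = 58.0                           # new debt over the past two months
RPO_NOW = 520.0                           # remaining performance obligations
RPO_GROWTH = 4.0                          # assumption: exactly +400% year over year


def oracle_summary() -> dict:
    """Derive rough ratios from the quoted figures (illustrative only)."""
    positions = round(WORKFORCE * CUT_SHARE_MAX)
    return {
        "positions_cut_max": positions,
        # Implied cash flow freed per position, in USD thousands.
        "per_position_low_k": round(FREED_CASH_LOW * 1e9 / positions / 1e3),
        "per_position_high_k": round(FREED_CASH_HIGH * 1e9 / positions / 1e3),
        # Freed cash flow as a fraction of the new debt.
        "debt_cover_low": round(FREED_CASH_LOW / NEW_DEBT, 3),
        "debt_cover_high": round(FREED_CASH_HIGH / NEW_DEBT, 3),
        # Upper bound on prior-year RPO implied by the growth figure.
        "rpo_prior_year_max": RPO_NOW / (1 + RPO_GROWTH),
    }


if __name__ == "__main__":
    for key, value in oracle_summary().items():
        print(f"{key}: {value}")
```

On these numbers, even the high end of the estimated savings matches well under a fifth of the debt taken on in two months, which is why the cuts read as gap-closing rather than a funding plan on their own.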

The business logic: Oracle’s core enterprise software revenue is structurally threatened by AI-native alternatives. Its only credible path to a higher multiple is becoming a compute-as-a-service provider to AI labs and hyperscalers. The Stargate contract with OpenAI is worth over $300 billion over five years. Oracle’s stock has still lost nearly 30% of its value this year, as investors weigh the scale of the infrastructure bet against the timeline to positive cash flow. The market doesn’t disagree with the strategy. It’s worried about the execution.

The Risk Nobody Is Pricing In

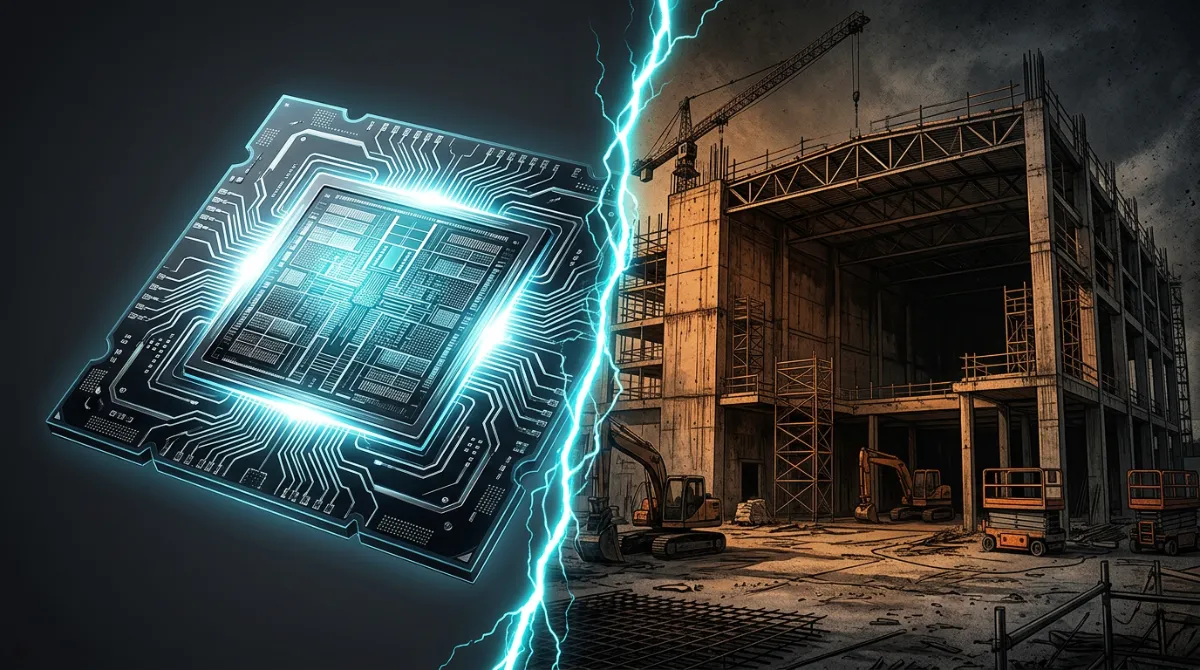

All three moves carry a version of the same structural risk: the gap between when you commit capital and when the hardware is profitable to run is widening, not narrowing.

The Abilene collapse illustrated it precisely. Because GPU generations turn over faster than power infrastructure can be built, the compute a new site holds at opening may already be one generation old. This has direct implications for anyone building or leasing AI infrastructure:

For hyperscalers, it argues for building sites as fast as possible — which is exactly what Meta is doing with El Paso’s 2028 deadline.

For cloud resellers like Oracle, it creates a chip timing problem. You’ve committed to a site. You’ve financed the hardware. The customer changes what generation they want. The financing was structured around the old generation. That dynamic is exactly what ended the 600MW Abilene expansion.

For smaller players like Mistral, speed matters less than locking in site control and energy agreements before the market gets more competitive. Its 44MW site, due to be operational by Q2 2026, is a beachhead, not a finished position.
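The timing problem running through all three cases can be sketched as a simple lookup: given the hardware generation a buyer finances and the year a site actually goes live, how many roadmap steps behind is that hardware on day one? The sketch below is a rough illustration, not a forecasting tool. Only the Rubin Ultra (2027) and Feynman (2028) years come from the article; the Blackwell and Rubin years are assumptions, and counting the Ultra variant as its own step is a simplification.

```python
from bisect import bisect_right

# (year, name) pairs in shipping order.
ROADMAP = [
    (2024, "Blackwell"),    # assumed year, for illustration
    (2026, "Rubin"),        # assumed year, for illustration
    (2027, "Rubin Ultra"),  # from the article
    (2028, "Feynman"),      # from the article
]


def generations_behind(financed: str, site_live_year: int) -> int:
    """Roadmap steps between the financed hardware and the newest
    generation shipping on or before the site's go-live year."""
    years = [year for year, _ in ROADMAP]
    names = [name for _, name in ROADMAP]
    financed_idx = names.index(financed)
    # Index of the newest generation available when the site opens.
    newest_idx = bisect_right(years, site_live_year) - 1
    return max(0, newest_idx - financed_idx)


if __name__ == "__main__":
    for year in (2026, 2027, 2028):
        lag = generations_behind("Blackwell", year)
        print(f"Blackwell financed, site live {year}: {lag} step(s) behind")
```

Under these assumed dates, a Blackwell order is one step behind if the site goes live in 2026 and three steps behind by 2028, which is the dynamic the Abilene expansion ran into.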

Multiple U.S. banks have already stepped back from data center lending, with interest rate premiums on data center lease financing reportedly doubling since September 2025. The capital market is beginning to price in execution risk on these bets. The companies still raising are finding it harder and more expensive than twelve months ago.

What Your Organization Should Do With This

If you’re a CTO or infrastructure lead: The Abilene timeline collapse is a direct lesson in vendor dependency risk. If your inference or training compute relies on a single cloud provider with a leveraged infrastructure buildout, build a contingency. Not because Oracle or anyone else will fail — but because financing constraints can alter which hardware generation you get access to, and when. Map your AI compute dependencies against your vendors’ debt exposure and chip generation timelines. It’s a due diligence question now, not a hypothetical.

If you’re a founder choosing cloud infrastructure: Mistral’s European buildout means you will soon have a credible, sovereignty-compliant alternative to U.S. hyperscalers for regulated European workloads. If your customers are in financial services, healthcare, or public sector in the EU, watch the Q2 2026 launch. It may unlock enterprise sales conversations you haven’t been able to have.

If you’re an enterprise software executive watching Oracle: The layoff-to-GPU reallocation model is coming to more enterprise software companies. Workday, SAP, Salesforce — any company whose core revenue depends on software categories AI will partially displace is making the same calculation Oracle made, just at different stages. When your vendors announce “strategic restructuring,” ask specifically where the cost savings are being redeployed. If the answer is infrastructure, your product roadmap is being deprioritized.

If you’re on a board or in a CFO seat: AI infrastructure has become a capital intensity question on par with semiconductor fabs or pipeline networks. The companies making $10 billion multi-year site commitments today are doing so because they believe 2027 capacity will be functionally unavailable at any price. Stress-test that assumption in your own planning — but stress-test the alternative too. The companies that wait for certainty on ROI before committing will find the supply is gone.

The Decision in Front of You

The current moment will look, in retrospect, like a consolidation window. The companies that secured sites, energy agreements, and chip allocations in 2025–2026 will have structural advantages in inference cost and availability through the end of the decade. The ones that treated infrastructure as a 2027 or 2028 problem are already behind.

That doesn’t mean everyone needs to spend $10 billion. It means knowing, concretely, where your AI compute is coming from in 2027 — who has committed to provide it, what their balance sheet looks like, and whether their hardware generation timeline matches yours. Most organizations don’t have that answer. Getting it is the first move.
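One way to start on that answer is a plain inventory: one record per vendor, with the questions above as fields. The following is a minimal sketch, assuming hypothetical field names, a placeholder leverage metric, and arbitrary thresholds (`max_share`, `max_leverage`) that you would replace with your own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComputeCommitment:
    vendor: str
    share_of_compute: float    # fraction of planned 2027 compute, 0.0 to 1.0
    capacity_contracted: bool  # is the capacity committed in a signed contract?
    debt_to_revenue: float     # placeholder leverage metric; choose your own
    offered_generation: str    # hardware generation the vendor plans to deliver
    needed_generation: str     # hardware generation your workloads require


def flag_risks(c: ComputeCommitment,
               max_share: float = 0.6,
               max_leverage: float = 2.0) -> list[str]:
    """Return the due-diligence flags raised by one commitment.
    Thresholds are illustrative defaults, not recommendations."""
    flags = []
    if c.share_of_compute > max_share:
        flags.append("concentration")
    if not c.capacity_contracted:
        flags.append("uncontracted")
    if c.debt_to_revenue > max_leverage:
        flags.append("leverage")
    if c.offered_generation != c.needed_generation:
        flags.append("generation_mismatch")
    return flags


def uncovered_share(commitments: list[ComputeCommitment]) -> float:
    """Fraction of planned compute with no named vendor at all."""
    return round(1.0 - sum(c.share_of_compute for c in commitments), 6)


if __name__ == "__main__":
    # Hypothetical vendors, for illustration only.
    plan = [
        ComputeCommitment("VendorA", 0.7, True, 2.5, "Blackwell", "Rubin"),
        ComputeCommitment("VendorB", 0.2, False, 0.5, "Rubin", "Rubin"),
    ]
    for c in plan:
        print(c.vendor, flag_risks(c))
    print("uncovered:", uncovered_share(plan))
```

The value is less in the code than in being forced to fill in every field: a blank in any column is the answer most organizations don’t yet have.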

For context on the compute financing dynamics behind these builds, see our earlier breakdown of OpenAI’s $110B raise and the Amazon partnership. For why infrastructure decisions are now inseparable from product strategy, see How Agentic AI Is Changing Enterprise Software in 2026.